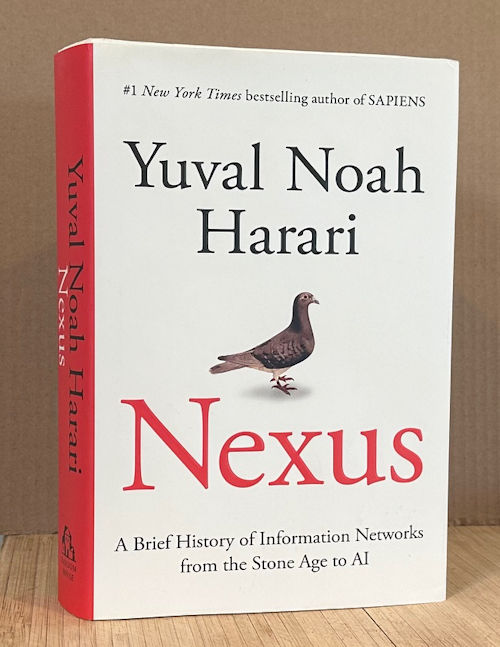

Subtitled: A Brief History of Information Networks from the Stone Age to AI

(Random House, Sept. 2024, xxxii + 492pp, including 88pp of acknowledgements, notes, and index; but no bibliography)

This is Harari’s fourth big book, following SAPIENS, HOMO DEUS, and 21 LESSONS FOR THE 21ST CENTURY. The subject of this one may seem a little more abstruse than the others, and maybe it is, but in fact the book is chock full of interesting stuff and typical askance Harari insights.

As usual, I’ll boil down key points and plant them here. Actually, I’ll give two levels of key points. Then below those, I’ll data-dump the 12,000 notes I took on the book as I read it, with some pertinent quotes along the way.

\\\

Key Points, 50,000 foot level:

- Information is not just statements about reality; it includes stories and errors and fantasies. There are both naive (more information is better) and populist (information as power) views of information; neither is correct. Stories enable cultures to grow; documents enabled bureaucracies and the further expansion of cultures.

- Errors are inevitable. Self-correcting mechanisms were created in support of what became science; religion lacks these, and has splintered into countless sects.

- Computers and AI increase engagement of all sorts of information, and can create their own fantasies.

- Information is used differently in democracies, which have self-correcting mechanisms [[ balance of powers, the vote ]], while totalitarian systems prefer to route all information through a central hub, making them more fragile and especially vulnerable to control by AIs.

- If humans are so smart, why are we so self-destructive? Because our information networks privilege order over truth.

\

Key Points, 10,000 foot view

Part I is about Human Networks

- Basic claims: information is not the same as truth, or statements about reality. Information can include “errors, lies, and fictions.” The ‘naive view of information’ is that more information leads to better accuracy; the ‘populist view of information’ is that it’s a weapon to gain power, as if there is no objective truth. This book is about the middle ground.

- [[ Comment: his ‘naive’ view seems to correspond with science, where increased accuracy is the goal. But he’s using ‘information’ in a broader sense. ]]

- Information forms a social nexus, not a representation of reality. Thus the success of astrology, and the Bible.

- The first information technology is: the story.

- Stories were instrumental in the formation of large cultures. Early humans didn’t form bonds of more than a few hundred individuals. Our networks became larger, since about 70,000 years ago, because of our aptitude to tell and believe fictional stories. Jesus, for example, wasn’t a lie; his story was the result of emotional projection and wishful thinking.

- The second information technology: the written document.

- And these enabled the formation of bureaucracies, and deep states, that are essential for maintaining modern societies.

- Yet people are comfortable only with ‘biological dramas’ — stories about relationships among people — and bureaucracies seem to them suspicious, like conspiracies.

- Religion solved this by simply declaring Holy Books to be free of error. Several sections discuss the history of the Bible: how some ancient texts were included, others excluded, how some were canonized as the word of God and others were not, how copyist errors slipped in, how later commentaries were themselves declared canonical, and so on, that eventually led to schisms between what became separate churches. Declaring texts infallible never worked.

- The scientific revolution got going by discovering that errors are inevitable, and developing self-correcting mechanisms to learn from mistakes.

- These two reactions map to the histories of totalitarianism and democracy, and the absence of self-correcting mechanisms in the former. Populism is at odds with democracy, but it simplifies reality.

Part II is about the Inorganic Network

- Computers, since the 1940s. New information networks emerge. Everyone can be monitored, all day.

- To increase engagement, algorithms and AI play to the biases of human nature, thus ramping up outrage and misinformation.

- All higher goals are merely intersubjective inventions; there’s no way to determine moral goals objectively. Potential solutions use deontology, or utilitarianism. Religions resort to future salvation (i.e. nothing in this world). But computers can create mythologies too.

- To many cultures, order is more important than truth.

Part III is about Computer Politics

- This is about how computers and AI are used differently in democracies vs. totalitarian societies.

- The former have strong self-correcting mechanisms, and ideally are guided by basic principles of benevolence, decentralization, mutuality, and room for both change and reset.

- Totalitarian societies, in contrast, prefer to control all information, routing it all through a central hub. Such regimes often rely on doublespeak (e.g. calling themselves democracies when they’re not).

- (The author mentions Trump only a few times, but his examples of how totalitarian societies work clearly match many of the things Trump’s administration is doing, e.g. shutting down agencies, suppressing official reports, etc.)

- Totalitarian societies claim themselves infallible, and so lack self-correcting mechanisms, and so are potentially more at risk from control by AIs.

- People remain stuck on biological dramas; we need artists who can explain bureaucracies (and the like) in accessible and entertaining ways. [[ Loosely, this is what science fiction does: it concerns things besides biological dramas, which is why some critics dismiss it as subliterary. ]]

- Cooperation among societies doesn’t mean losing local traditions; that’s a false binary. Globalism just means commitments to global rules, as with the World Cup.

- But is cooperation possible, or is life always about competition for power? There’s plenty of evidence that cooperation is possible. The only constant of history is change.

- Politics is largely a matter of priorities, relying on historical narratives. Now AI can change how information flows. We can acknowledge mistakes, embrace new ideas, and revise the stories we believe.

- The question asked at the beginning was: if humans are so smart, why are we so self-destructive? And the answer is: because our information networks privilege order over truth…

\\\

Detailed Notes

Prologue, p. xi [[ 22pp in all ]]

If our species is so wise, why are we so self-destructive? And, if we’ve accumulated so much information, why don’t we have the answers to the big questions: who are we? What should we aspire to? What is the good life, and how should we live it? We are “susceptible” as ever to “fantasy and delusion.” (xii .1) Many traditions believe in some fatal flaw that we can’t overcome. Phaethon; the sorcerer’s apprentice. What do we do? Faith in gods or sorcerers is dangerous, because those are invented by humans too. The myths depict power coming to a single person, but in reality power comes from the cooperation of large numbers of humans. So it’s not individual psychology. Why do human societies entrust power to their worst members? Hitler. It has to do with how our species cooperates in large numbers. We gain power through networks of cooperation, but the way these networks are built inclines us to use power unwisely. Main argument, xiv.3 – a network problem:

It is an information problem. Information is the glue that holds networks together. But for tens of thousands of years, Sapiens built and maintained large networks by inventing and spreading fictions, fantasies, and mass delusions — about gods, about enchanted broomsticks, about AI, and about a great many other things.

That’s how we got to Nazism and Stalinism. It didn’t matter they were built on lies. They might have won, and a new totalitarian regime may yet emerge in the 21st century.

p.xv, The Naïve View of Information. This view argues that big information networks can achieve a better understanding of medicine and so on than individuals can. Information leads to truth, which leads to both power and wisdom. Mistakes can be made, but by gathering even more information they can be corrected. More info leads to better accuracy. Even disagreements about values can be examined with more or better information. Racists, e.g., are just ill-informed. Thus the pursuit of more information technologies is a good thing. Examples from Reagan and Obama. Similarly Zuckerberg and Kurzweil. And Google’s mission statement. (Several references to Kurzweil’s 2024 The Singularity Is Nearer.)

p.xix, Google versus Goethe. There are many cases in which having more info has led to a better world. Child mortality. Examples in Goethe’s family. 25% childhood survival rate. Now it’s 95 or 99%. Yet the vast accumulation of modern data hasn’t made humanity any safer. Now AI is coming; will that help? Andreessen and Kurzweil say yes. Others are skeptical. AI could make human conflicts worse. Or humans might be divided from AI. AI can make decisions all by itself. And generate new ideas by itself.

p.xxiii, Weaponizing Information. Homo Deus in 2016 argued that the hero of history was information, not Homo sapiens. Everything is information networks. Since then the pace of change has accelerated. Some of that book’s ideas have become realities in 2024. Other things have changed, including populist movements, and conspiracy theories, that argue that traditional institutions are simply lying. Why? To gain power. This is the populist view of information, p. xxiv. Information as a weapon. Some posit that there is no objective truth, only power. These ideas aren’t new. Plato, Foucault, Said. Marx. History is a power struggle between oppressors and oppressed. Thus Trump and Bolsonaro share Marxist views. The people vs the elites. Are populists then lying to gain power? [as MAGA is] Some respond with ‘do your own research’. How would this result in cooperation to build large-scale institutions? xxvii top. A populist alternative is to retreat to divine revelation or mysticism. Trust the Bible or the Quran for absolute truth. Or in a charismatic leader. This erosion of institutions is proceeding as existential threats loom, including ecological collapse, global war, and out-of-control technology. To avoid this fate, we need to better understand what information is and how it relates to truth and power. Both the naïve and populist views of information are not to be relied on. This book is about the middle ground.

p.xxviii, The Road Ahead. (Summary of future chapters.) Historical development. Part 1: Mythology and bureaucracy. Both necessary, but pulling in different directions. E.g. Christian church, which split into rival churches with different balances between these two. Ch4, Then, erroneous information and self-correction mechanisms, weak v. strong (church v. science). Ch5, Then, distributed vs centralized information networks. Democratic vs totalitarian systems.

History is the study not of the past, but of change. [[ this is the alliance of history with sf ]] We’ll study how the Bible was canonized. And so on. The book’s second part looks at new information networks being created today. How AI is different. By being inorganic. Which need never sleep. The third part of the book deals with computer politics, dealing with threats of information networks. How democracies might react, how totalitarian systems might do so. And how AI might influence the balance of power between these two.

Begin with: what is information?

Part I: Human Networks.

Ch1, What Is Information?, p3

Many see information as more elementary even than matter and energy. There is no universal definition of information. A common definition involves symbols or spoken words. Example of the Lost Battalion, and a pigeon named Cher Ami, in 1918. But the olive branch in the Noah story was also information. Many other things. It’s a matter of perspective. (Or context) Examples of information in combat. Information in one view aligns with truth. Reality. When it succeeds, we call it truth. Truth is an accurate representation of reality. (This is the naïve view of information, but author agrees that truth is an accurate representation of reality.) Yet most information in human society doesn’t represent anything.

P7, What Is Truth? Truth is something that accurately represents certain aspects of reality. Beneath this is the premise that one universal reality exists. People may have different beliefs, but they cannot possess contradictory truths, because they all share a universal reality. 7b. [[ here again is a key point. ]]

Yet truth and reality are different things, because any truth can never represent all of reality. Examples. A ‘truth’ can describe different realities, e.g. how many of which kind of soldiers among 10,000. Also, reality contains many viewpoints. Reality is complex. Reality includes objective facts that don’t depend on people’s beliefs. E.g., two sides of a war. Long example of Sarah Aaronsohn, the Palestinians, and the Jews. Or of any other issue. Complete accuracy would require a one-to-one account, e.g. a Borges story. Any truth can represent only a portion of reality.

P10, What Information Does. The naïve view includes the idea that misinformation and disinformation are problems, and assumes the solution is more information. On this point this book disagrees. Example of astronomy and astrology. Many countries still take astrology very seriously. That is, errors, lies, fantasies, and fictions are information too. Information has no necessary link to truth. Information creates new realities. It connects. Consider music. It doesn’t represent anything, yet connects many people.

Information puts things in formation. Consider DNA. (Details recall idea that genes don’t “know” what they’re doing, they only guide behaviors that happen to lead to survival, or whatever.) DNA isn’t true or false. But errors in DNA can create new realities.

So information connects different points in a network. Moon landing, hoax, music. Zuckerberg’s Metaverse. It’s entirely information. If only a pipe dream at the moment.

P15, Information in Human History. So information is a social nexus, not a representation of reality. Thus we can understand the success of astrology, and the Bible. The Bible makes many serious errors in its description of both human affairs and natural processes, 15.6. Genetic and archaeological evidence disputes its history. It depicts epidemics and divine punishment for sins. And yet, the Bible has been effective in connecting billions of people and creating religions. Religions can do many things individuals cannot.

So: information represents reality only sometimes. But it always connects. This isn’t to dismiss the notion of truth. Some information does connect people by accurately representing certain aspects of reality. But this requires special effort. Thus more information will not necessarily lead to a more truthful understanding of the world. Thus, history shows a rise in connectivity, but not in truthfulness or wisdom.

Contrary to what the naive view believes, Homo sapiens didn’t conquer the world because we are talented at turning information into an accurate map of reality. Rather, the secret of our success is that we are talented at using information to connect lots of individuals. Unfortunately, this ability often goes hand in hand with believing in lies, errors, and fantasies. This is why even technologically advanced societies like Nazi Germany and the Soviet Union have been prone to hold delusional ideas, without their delusions necessarily weakening them. Indeed, the mass delusions of Nazi and Stalinist ideologies about things like race and class actually helped them make tens of millions of people march together in lockstep.

We’ll see this history in chapters 2-5. And the first information technology is: the story.

[[ This matches the thesis that humanity succeeded not via tools, but via social skills that allowed larger and larger groups of people to cooperate. ]]

Ch2, Stories: Unlimited Connections, p18

Recap of earlier two books. The idea of cooperating in large numbers. At the same time, we don’t form long-term intimate bonds with more than a few hundred individuals. So how did our networks become so big? What changed about 70,000 years ago was we developed the aptitude to tell and believe fictional stories and to be deeply moved by them (19m). So humans didn’t have to know each other; they just had to know the same story. Billions could. Even great leaders are bound up in the story about them. Influencers and celebrities understand this; it’s called having a brand. Like Coca-Cola. As with that pigeon, parts of the story are doubtful. Such problems were forgotten; the story grew in the telling. As an extreme example, consider Jesus. So many stories now mask any attempt to recover the historical person. 22t. (ref to Bart Ehrman.) His story was not a lie; it was the result of emotional projection and wishful thinking, 22.8. The story had a much greater impact than the person. Shared stories build trust by making strangers seem part of our family. Ironically the last supper was at Passover, when Jews “remember” their personal experience of the escape from Egypt. Their souls were all there, say the sages. They all share a fake memory.

P24, Intersubjective Entities. Like DNA, stories can create new entities, a third level of reality. First we had objective reality and subjective reality. Third is intersubjective reality. Such things are laws, gods, nations, corporations, and currencies that exist in the nexus between large numbers of minds. [[ This was the theme of Sapiens. ]] In the stories people tell. It is the stories that create these things. Consider pizzas, and the Loch Ness Monster. Believing in the latter doesn’t counter the evidence that it does not exist. States are such entities; consider Israel and Palestine. The US went through a phase where some accepted its existence and others denied it. Stories that create intersubjective realities have been most crucial for the development of large-scale human networks. …

P28, The Power of Stories. These stories gave Homo sapiens the edge over other animals, including Neanderthals. Humans developed larger and larger tribes. It enabled distant tribes to cooperate. This explanation is denied by Marxists, who focus on material interests and power. But the Crusades, World War I, and the Iraq War were about religious, national, and liberal ideals. Further, chimps also have material interests and seek social power; but they don’t have intersubjective identities. Among humans, conflicts are not just about brute power; rather we can change the stories people believe. Take Nazism as an example. They had a story. It didn’t help; they lost. And their story became one of liberal democracy. It’s too bad they didn’t make that shift earlier.

P31, The Noble Lie. But power doesn’t align with wisdom; information leading to truth and wisdom is the naïve view. In history, power stems only partially from knowing the truth; it also stems from the ability to maintain social order among large numbers of people. Getting people to cooperate like this required a common story, an ideology. Telling the truth doesn’t solve political disagreements. Fiction does that, and fiction can be as simple as we like. p33m:

…what holds human networks together tends to be fictional stories, especially stories about intersubjective things like gods, money, and nations. When it comes to uniting people, fiction enjoys two inherent advantages over truth. First, fiction can be made as simple as we like, whereas the truth tends to be complicated, because the reality it is supposed to represent is complicated. Take, for example, the truth about nations. It is difficult to grasp that the nation to which one belongs is an intersubjective entity that exists only in our collective imagination. You rarely hear politicians say such things in their political speeches. It is far easier to believe that our nation is God’s chosen people, entrusted by the Creator with some special mission. This simple story has been repeatedly told by countless politicians from Israel to Iran and from the United States to Russia.

Further, the truth involves unpleasant facts, and no one wants to hear about those. Plato’s Republic imagined the “noble lie.” 34.4. But this doesn’t mean that politicians are liars. It’s lying only when you pretend the fictional story is a true representation of reality. Thus the Constitution didn’t presume to be handed down from heaven; it was a creative legal fiction. It created order, if not fairness or justice. But it allowed itself to be amended. This makes it distinct from the Ten Commandments. Which still endorse slavery to this day. So some political systems admit they’re based on fictions, and some do not.

P36, The Perennial Dilemma. We can now present a more complex model of information networks. 37t. To survive and flourish, information networks need to both discover truth and create order. And the second part can include fiction, propaganda, lies. P37 diagrams. See 38t. Ignorance can be strength. Societies may place limits on the search for truth. Thus Darwin and evolution. Some societies prefer to sacrifice truth for the sake of order. Or limit research to just the fields that don’t threaten the social order. This is why the growth of human information networks hasn’t led to greater wisdom. It’s a tightrope walk balancing truth with order. This takes us to the second great information technology: the written document.

Ch3, Documents: The Bite of the Paper Tigers, p40

Stories have their limitations. Consider that the stories nations tell themselves have often been conceived by poets. Examples of those who inspired Israel. A founding poem by Bialik inspired the Jews to settle in Palestine—but the poet knew nothing about the peoples already there. As did works by Herzl. They relied on stories of the Bible. Other nations created their own myths. But myths don’t build cities; that required sewage systems, security, education, health care, and taxes to pay for them. Which in turn required all sorts of information collected and processed. Lists, tables, spreadsheets. Almost all institutions rely on lists, and accountants. Lists and stories are complementary. Lists are more boring, not easily remembered, unlike stories, even long ones. The Ramayana. Human memory is adept at retaining stories. (Note a Kendall Haven 2007 Story Proof.) For lists, we need written documents.

P45, To Kill a Loan. Documents go back to Mesopotamia, 2000 bc. But documents can contain mistakes. Yet documents create new realities. Ownership can be an intersubjective reality of a community, and documents make ownership easy to transfer. In one culture destroying a tablet ‘killed’ the loan it represented. Just as your dog eating a hundred-dollar bill destroys your money.

P48, Bureaucracy. But written documents created problems of storing and finding them. We retrieve things easily in our minds. We forage because there is organic order to the forest. For archives someone has to set up a system to organize the contents. That system or order is called bureaucracy. Yet bureaucracy can sacrifice truth for order, just like mythology.

P50, Bureaucracy and the search for truth. Dividing the world into drawers. But this order can be artificial. Forms can offer only limited options. Such distortions also affect science. Universities divide studies into separate disciplines. There’s the pressure to specialize. Examples. Consider biology, and the need to classify living organisms. Species change. They can interbreed. Genes can transfer horizontally. Viruses are especially complex. Life or not? Another intersubjective convention. As is the idea of an endangered species.

P54, The Deep State. How else to manage big networks? Holistic approaches don’t make a hospital function, or the sewage system work. They are deep states. Without them we had much more disease and death. Example of cholera in 1854. And how India is addressing the problem of toilets in the 2010s.

P56, The Biological Dramas. Yet bureaucracies have negative connotations. Because they’re hard for humans to understand. E.g. how we never know where our taxes go. Reliance on documents shifts authority. To administrators, accountants, lawyers. Artists have difficulty telling stories about them. 58.9: As rooted in our biology… These are the biological dramas. Consider the plot of the Ramayana. Relations between relatives. Parents and children; fear of being abandoned, 59m. Related: parental favoritism, sibling rivalry. Examples in other species. And in popular stories. Then there is romance, and romantic triangles. Third is tension between purity and impurity; the struggle to avoid pollution, 60.6. Disgust mechanisms. Especially traditional Hinduism, with a system of castes. Modern India. Additional classic biological dramas: who will be alpha? Us versus them. Good vs evil. These dramas inform almost all human art and mythology. And thus make it difficult to explain how bureaucracies work. Kafka pioneered new nonbiological plotlines. The Trial. Others: Catch-22. British TV. The Big Short. Yet most stories that attract us “are essentially Stone Age stories about the hero who fights the monster to win the girl.” 63m. [[ Again, this can tie to my description of simplistic sf, or space opera… higher-level sf comes from engagement with the external real world, not just the interactions of human beings. ]]

[[ Biological dramas are thus interplays among people expressing base human nature. Family relationship, purity and impurity, hierarchies, us vs. them. Thus fiction about bureaucracies is analogous to science fiction. ]]

[[ A good take on the crucial difference between different kinds of fiction. Classic and “mainstream” fiction is all about biological dramas. Character relationships; changes in character. Science fiction is about humanity’s interaction with the universe, and is dismissed by traditional literary critics precisely because it does not limit its focus to “biological dramas.” ]]

Citations of Behave by Sapolsky, Inheritance by Whitehouse.

P63, Let’s Kill All the Lawyers. A consequence of the difficulty in understanding bureaucracies is that they can seem like a malign conspiracy, even when they’re necessary and do good. Examples. Shakespeare: kill all the lawyers. In the 1381 Peasants’ Revolt, the archives at Cambridge were burned. Similar incidents, p65. An incident with the author’s grandfather, and hysteria over Jews. Many lost their citizenship.

P68, The Miracle Document. Bureaucracies, love them or hate them? The historical lesson is that merely increasing the quantity of information doesn’t help. AI will be both bureaucrats and mythmakers. But we have one more thing to consider: how truth can be sacrificed for the sake of order. And this involves how information networks deal with errors. Beginning with the idea of Holy books. They’re meant to include all the vital information society needs and be free from all possibility of error, 69.2

Ch4, Errors: The Fantasy of Infallibility, p70

The fallibility of human beings has played key roles in every mythology. Humans have long fantasized about some super machine that would correct their mistakes. This fantasy is dangerous. Past fantasies were called religions. 71t. The function of religions has been to establish a superhuman legitimacy for the social order.

P71, Taking Humans Out of the Loop. At the heart of every religion is the fantasy of a superhuman and infallible intelligence. Yet messages from the gods tend to contradict one another. Example from Harvey Whitehouse. The human messengers are always fallible. One attempt would be to establish religious authorities. Priests, oracles. But they’re human too. Then what?

P73, The Infallible Technology. Religious books are a technology to bypass human fallibility. They’re unlike other kinds of written texts in that there are many copies and they’re all supposed to be identical. They became a religious technology in the first millennium bce. But who decides what goes in the holy book? The wisest humans? Who selects them? But that’s how it was done.

P75, The Making of the Hebrew Bible. There was no Bible as a single book in biblical times. None of the Dead Sea Scrolls contain a Bible or indicate what should be in it. Some scrolls were incorporated into Genesis, but many scrolls were excluded from the Bible. Yet some sects do consider them part of their canon. Similarly Psalms. Centuries of debate among rabbis resolved various differences. They decided Genesis was the word of Jehovah, but texts like the Life of Adam and Eve and the Testament of Abraham were human fabrications. Psalms of King David were canonized, but not those of King Solomon. And so on. Some books mentioned in the Bible got lost, and weren’t canonized. Over time orthodoxy said the first part of the Bible was handed down by God to Moses. Once the contents of the holy book were sealed, copies were made and disseminated. This both democratized religion, and prevented anyone from meddling with the text.

P78, The Institution Strikes Back. Another problem with this canonization was dealing with the actual copyists. The rabbis devised strict rules. But copying errors crept in anyway. Second, various words had to be interpreted consistently. What is ‘work’? Rules for cooking young goats; milk and dairy. The world changes; how do the old rules apply to new situations? Examples. Rabbis wrote a new holy book to canonize their interpretation of the Bible: the Mishnah. Some came to believe *it* was the holy book handed down by Jehovah. And Jews argued about that book too. Then came the Talmud. And arguments about that. Ultimately the rabbis had authority. Things changed; technology developed; new questions arose. Today we have separate elevators for the Orthodox. Judaism has become an information religion, obsessed with texts and interpretations. As never happened in the Bible itself. Words in texts and their interpretations anticipated our ideas of an informational universe.

P84, The Split Bible. But there were multiple competing chains. Of canonizations. Dissenters were Christians. Only Jesus was the authority on the texts. They differed in the meanings of words, like almah, young woman or virgin. Different Christians claimed different interpretations, creating chaos. Examples p85. Other apocalypses, other gospels. Additional acts. More epistles. Eventually the New Testament was created. Some texts were included, others were left out. 367 CE. Then 393 and 397. Before that different groups had different lists of recommended texts. Examples. 86-87. We don’t know the reasons. But they had far-reaching effects on the churches. E.g. about the role of women in church. Most Christians forget that the New Testament was curated by church councils; they view it as the infallible word of God. They read what church officials told them to read, and so the church itself became a powerful human institution.

P89, The Echo Chamber. Struggles of authority led to schisms, e.g. the Western Catholic church, and the Eastern Orthodox church. Example of how Catholics rationalized the Sermon on the Mount to justify burning heretics. The church also controlled the distribution of books, and so could suppress anything it considered heretical. Early libraries had very few books. The church sought to lock society inside an echo chamber. They read Saint Paul and Augustine and Aquinas and that was about all.

P91, Print, Science, and Witches. This idea of bypassing human fallibility by declaring certain texts infallible never worked. Protestants tried it too; didn’t work. How to deal with human error then? The naïve view of information as leading to truth doesn’t work either. It’s wishful thinking. Consider the European print revolution, beginning about 1454. Did this freedom from the church lead to the scientific revolution? Only in part. Print only faithfully reproduces texts: any texts, including religious fantasies, fake news, conspiracy theories. Example of the witch-hunt craze. Common beliefs in all cultures. Not so much in Europe, until the early 1400s. Witch hunts began. A global satanic conspiracy. One proponent published a guidebook for torturing and killing witches. Very misogynist. How witches stole men’s penises. The book was a bestseller in the 1500s and 1600s. The printing press played a key role. Mass hysteria led to execution of tens of thousands. Flimsy evidence. People were tortured until they confessed. Example of Munich in 1600.

P97, The Spanish Inquisition to the Rescue. Those accused would be tortured to name accomplices. In 1453 a French debunker was tortured and confessed and got life imprisonment. Witches had become an intersubjective reality, made real by the exchange of information about witches. A whole bureaucracy grew up. … It was a means for certain people to gain authority and for society as a whole to discipline its members, 98b. As the bureaucracy grew it became harder to dismiss it all as fantasy. [[ The same can be said about any conspiracy theory. ]] If everyone said it, it must be true. So the witch hunts went on. Examples. Long letter. A problem created by more and more information. A few at the time realized it. Yet the free market encouraged it. (Just as it does now.) So what really got the scientific revolution going?

P102, The Discovery of Ignorance. What was needed was curation to further the pursuit of truth, rather than simply power. Institutions that did this formed the bedrock of the scientific revolution. They weren’t universities. It was trust through associations like the Royal Society in London. And others. Institutions had self-correcting mechanisms that exposed and rectified errors. These were the key. In other words, the discovery of ignorance. It was assumed that errors are inevitable. Science is a team effort, not the result of lone geniuses or infallible books. Examples.

P104, Self-Correcting Mechanisms. These are the opposite of holy books. This is about learning from mistakes. Like learning to walk. Bodily processes. Institutions possess such mechanisms, or they don’t last long. The Catholic Church’s mechanisms are weak; it can’t admit mistakes. Examples of wiffle-waffling. Many religions assume that God kept church members free from error. Examples. Papal infallibility. The “deposit of faith.” The Church occasionally makes apologies for past behavior, but blame was laid on church members, not on scripture. Examples. Yet in fact, the Crusades and attacks on various people were justified through the scriptures. Examples. Its ‘interpretation’ of scriptures has changed. But the first rule is never to admit that church teachings have changed. Examples of Jews, women, homosexuals. To deny its infallibility would be to destroy its authority.

P110, The DSM and the Bible. In contrast, scientific institutions have strong self-correcting mechanisms. And they admit the institutional responsibility for mistakes. E.g. institutional racism. These mechanisms enable science to develop fairly rapidly. Psychiatry offers examples, as in the DSM. [[ Of course this change in knowledge is exactly what conservatives don’t like about it – such change is ‘woke.’ ]]

P111, Publish or Perish. And these institutions actively seek to expose error and ignorance. Religions incentivize conformity. They resist new ideas. Science works the other way around. Of course, scientists rely on others, e.g. how the author read a book about witches, published by a trustworthy university press. Of course critics charge scientific institutions with stifling unorthodox views. Example of quasicrystals. Dan Shechtman. The self-correcting mechanisms of these institutions help overcome human bias. There have been exceptions; Soviet Union and Lysenko.

P116, The Limits of Self-Correction. These mechanisms are costly in terms of maintaining order. They undermine the myths that hold social order together. Undermining them could lead to worse orders. Scientific institutions leave maintaining order to other institutions – like the police. Democracies believe self-correcting mechanisms can be self-maintaining; dictatorships do not. So the question will be: will AI favor or undermine such self-correcting mechanisms?

[[ Author cites Ehrman’s books several times ]]

Ch5, Decisions: A Brief History of Democracy and Totalitarianism, p118

This considers how democracy and dictatorship work in terms of information networks, and how new technologies help different kinds of regimes flourish. (Note references to Graeber & Wengrow’s 2021 The Dawn of Everything here. On my shelf, but haven’t yet read it.)

Dictatorial information systems are highly centralized. Rome, Berlin, Moscow. Second, they assume the center is infallible. There are no self-correcting mechanisms. If any arise, dictators seek to neutralize them. [[ This is what’s happening in Trump’s America. ]]

A democracy is a distributed information network with strong self-correcting mechanisms. There is a central hub, but also a lot of interconnected nodes. People and communities decide things for themselves. As little as possible is decided by will of the majority. Yet all democracies provide basic functions, like security, health care, education, and welfare. Democracies assume that everyone is fallible, including those in the government. Thus separation of powers, regular elections, and so on. Yet certain decisions have to be made that affect everyone.

P121, Majority Dictatorship. So: democracy is about more than elections. Elections can be rigged. It’s not a majority dictatorship. A majority, no matter how big, can’t vote to send others to death camps. There have been examples in history. Others use democracy to undermine it—Putin, Orban, et al. They attack the self-correcting mechanisms: the courts and the media. And so on, 123m [[ again Trump ]] Supporters think winning the election gives them unlimited power. The majority can do many things, but it can’t touch human rights, or civil rights. …

P125, The People Versus the Truth. But countries can determine which rights belong in those two baskets. There are limits on everything, even right to life. Elections are not a method for discovering truth. Elections are a method for maintaining an order. And some people desire things other than the truth. Example of the Iraq war; there were no WMDs, nor did the Iraqis wish to be liberated. Examples of leaders accused of corruption, and climate change. Voters can decide whatever they want, but they can’t deny the truth. Discovering the truth is up to academic institutions, the media, and the judiciary. And multiple institutions can work in different ways, to check on each other.

P129, The Populist Assault. And so democracy is complicated. Dictatorships are easy. Most people don’t understand how institutions work. Populists attack them. Ironically populists end up placing all power in a single party or leader. If someone else wins an election, then it was stolen—they really believe that. People voting the other way are ‘wrong,’ have been deceived. Further, ‘the people’ is a unified mystical body, not an actual collection of individuals. Examples of the Nazis. And many groups deny that the people contain a diversity of opinions. [[ Just as Trump somehow believes those who oppose him “hate America” ]] Only people who support the leader are “the people.” Thus populism is at odds with democracy. Democracy does not entail political voting in other spheres, e.g. biology. Populists are therefore suspicious of institutions as threats to the will of the people. Power is the only reality; their view of the world is dark and cynical. 133t. Why is this view so appealing? It simplifies reality; everything is about elites pursuing power. Also, sometimes it’s correct. People are fallible, and some are crooked. That’s why self-correcting mechanisms are needed. Strongmen like populists because they provide a basis for being dictators while pretending to be democrats. They have rationales for controlling institutions. Why would populists trust the strongmen? Because of the mythology that the strongmen embody the people.

P134, Measuring the Strength of Democracies. Democracy isn’t just about elections; the strength of a democracy is about whether the government can rig elections and how safe it is for media outlets to criticize the government. It’s about conversations, and where they take place. Example North Korea. The US in contrast. People can attack the government all the time—but speeches in Congress never change anyone’s mind. Those conversations take place elsewhere.

P136, Stone Age Democracies. [[ More references to Dawn of Everything in this section ]]. Ancient hunter-gatherers were relatively democratic—information networks were distributed and self-correcting mechanisms were in place. In bands of a few dozen people. Larger tribes of hundreds or thousands of people usually managed this too. Leaders of these groups had limited resources, or ability to control others’ lives. Unhappy members of the group could simply walk away. Following the agricultural revolution it became easier to manage the flow of information, and autocrats relied on bureaucracies. Democracies were still possible, as with Athens in 5th and 4th centuries bce. Only adult men could vote. But big kingdoms and empires were never democratic. Subject lands of Athens were not given the rights of Athenian men. Rome otoh did extend citizenship. Details… By the third century CE all major human societies were centralized information networks lacking strong self-correcting mechanisms. Small democracies remained, but not large-scale networks.

P141, Caesar for President! So why do democracies fail? Consider the Roman empire. A key is that democracy isn’t only about elections. It’s more about having conversations. Over long distances you need some kind of information technology. And people need to understand things they haven’t experienced first-hand. That requires education and media. These became more difficult as towns got bigger. The empire got so big democracy wasn’t workable. Examples from Pompeii show how campaigning worked even then.

P146, Mass Media Makes Mass Democracy Possible. Beginning with the printing press. Examples of polities in the 1500s. Rights were limited to wealthy men. Various rights. But they had problems. Periodic pamphlets became newspapers. They can issue corrections in a day. Especially in the Netherlands. Debates between big and small government emerged, 149b. Readership was fairly light; democracy was limited to wealthy white men. In 1824 only 7% voted. Still, strong self-correcting mechanisms existed. Checks and balances. Including the free press.

P152, The Twentieth Century: Mass Democracy, but also mass totalitarianism. Then came the telegraph, telephone, TV, radio, trains and planes… By Lincoln’s time, his speech was in print the next day in many cities. A century later, people were connected in real time. By 1960 some 64% of adults voted. But mass media also enabled totalitarian regimes.

P154, A Brief History of Totalitarianism. Such systems assume their own infallibility, and seek total control of people’s lives. The latter was impossible before modern information technology. Unlike totalitarian networks, autocratic networks have technical limits. Example of the Romans, and Nero. Modern regimes like Stalinist USSR operate on a different scale. The Roman emperors and generals were usually killed by their subordinates.

P156, Sparta and Qin. Sparta came close to being totalitarian and actually included some self-correcting mechanisms. Details. More ambitious was the Qin dynasty in China, 221-206 bce. It tried to dismantle regional powers and standardized things, built a road network, and everything was intended to serve a military need. Social order was militarized. Males were given ranks. At least they were ambitious. The official doctrine was Legalism, 158b. [[ Rather how conservatives think people are essentially bad ]] And suppressed more benign philosophies. Certain books were confiscated. But its scale and intensity were its ruin. People became resentful, resources were lacking. Revolts broke out in 209 bce. The empire splintered into 18 kingdoms. After several years the Han dynasty reunited the empire, and turned to Confucian ideas. …

P160, The Totalitarian Trinity. Modern technology made large-scale totalitarianism possible. Summary about Bolsheviks, Marx, belief in their infallibility. They created a one-party state, based on what Marx taught. Stalin inherited this. His network had three branches: state ministries and the Red Army, etc; the Communist Party; and the secret police. They all spied on one another. The secret police were as powerful in their way as the Red Army. Similarly the SS in Nazi Germany. The secret police commanded information. The Great Terror of the late 1930s saw the secret police purging and killing the other branches. Stalin’s self-correcting mechanisms were a self-terrorizing apparatus.

P164, Total Control. Totalitarian regimes are suspicious of people who gather to exchange information. Example from Hitler. All local clubs came under Nazi administration. Jews were banned. Stalin was even harsher. Card catalogs on everyone and everything. Farms were collectivized. All families would have to join. Moscow would decide what crops they would grow, and who would do each job. Villagers wanted none of it. Predictions failed; harvests shrank. Millions died. …

P167, The Kulaks. Delving a little deeper… Just as with the witch craze, authorities invented a category of nonexistent enemies. Kulaks, or capitalist farmers. They were greedy and selfish, because they had more of something than everybody else. Stalin wanted them all liquidated, using secret police. Deported or executed. Their aim was a million in two years. Target numbers were identified in each region. They were identified almost randomly. [[ Sounds a lot like ICE ]] Example. Some assigned hard labor. Children were branded forever. Enemies of the people. Kulaks were a new intersubjective Soviet truth.

P171, One Big Happy Soviet Family. Stalin set out to dismantle the family itself. Children would be taught to worship Stalin as their real father, and inform on their parents if necessary. Example of Pavlik Morozov. Who got his father murdered, and eventually became a martyr. Other examples.

P173, Party and Church. Were the Nazis and Communists much different from the Christian churches? For one, the churches were usually at odds with the state. Also, churches were tradition-oriented; the totalitarian parties were revolutionary. Examples of icons and Byzantine emperors. Churches had as little contact with each other as anyone. They were autonomous, with local traditions. Popes only became so powerful in the modern era.

P176, How Information Flows. So: democracy has many independent hubs for information to flow through; totalitarianism wants all information to pass through one central hub. The latter can make decisions quickly. Otoh, if official channels are blocked, there are no others. Sometimes subordinates block bad news from their superiors. Also, such regimes’ aim is to preserve order, not discover truth. Example Chernobyl. Soviets suppressed the news as long as they could. In democratic countries the media will report news that the government suppresses. Three Mile Island.

P179, Nobody’s Perfect. Further, since they believe themselves infallible, the networks have weak self-correcting agencies. Leaders will refuse to admit mistakes, blaming foreigners or traitors. [[ Like Trump ]] Example Lysenkoism. Example accidents in factories and lack of safety measures. Moscow blamed international saboteurs. Example Pavel Rychagov. Stalin was the real saboteur, and made diplomatic errors. He didn’t deal with Germany, until Germany invaded Soviet territories. Scholars blame the psychological costs of Stalinism. And so on. After victory in 1945 Stalin launched another wave of terror, concocting an American-Zionist conspiracy among doctors. Stalin’s care when he had a stroke was delayed for this very reason. It’s not that Stalin’s system was dysfunctional. Other countries made errors too. On its own terms, Stalinism was one of the most successful political systems ever devised. 184b. And many in the 1940s and 50s thought it was the wave of the future.

P185, The Technological Pendulum. Now we can understand why democracy and totalitarianism flourish in certain eras and are absent in others. It’s because of revolutions in information technologies. Totalitarian regimes face ossification; democracies face fracturing. Consider the 1960s. As Western democracies relaxed, marginalized groups spoke up, destabilizing the old order. Reactionaries switched from words to guns. Examples. Were those societies falling apart? Meanwhile regimes behind the Iron Curtain stifled all those groups, and centralized power. Twenty years later all that caught up with the Soviets, and the system collapsed. Its centralized economy was slow to react. Example of semiconductor industry. In the West there was competition; but not in the Soviet Union, where technology was a secret, top-down affair. The Soviets resorted to stealing and copying western technology, but that just left them behind. Did that mean that democracy won over totalitarianism? 189t. No, because the internet, social media, AI etc are again straining the ability to maintain social order in democracies. And perhaps AIs can concentrate all information in one hub. It’s all about the struggle between order and truth. The new information networks may work without human intervention. A new Silicon Curtain, separating humans from algorithmic overlords. That’s what the rest of the book is about.

[[ This fits very nicely with the news this week (in 2025) of making sure AIs are ‘woke’. How would they even do that? ]]

Part II: The Inorganic Network

Ch6, The New Members: How Computers Are Different from Printing Presses. P193

So what’s driving the current information revolution? The seed is the computer. Ever since the 1940s. A computer can potentially make decisions by itself, and create new ideas by itself. Such ideas go back to Turing in 1948, and Kubrick and Clarke in 1968. Earlier media couldn’t make decisions. Today, social media algorithms can spread hate. Example Myanmar 2016-7. Inspired by fake news on Facebook. Against the Rohingya. Buddhists feared being ‘replaced’ even though they were 90% of the population. But why blame Facebook? It did apologize. It’s because of the algorithms. They make decisions by themselves. Recall how the Bible was born as a recommendation list, p198m (by selecting some books but not others to be included). Facebook auto-played hateful videos, until 2020. The goal of the algorithms was to maximize “user engagement.” And outrage generated engagement. By the 2020s the algorithms created fake news and conspiracy theories themselves. The AIs are powerful new agents.

Some object that AIs, not being conscious, cannot make decisions. But intelligence is not consciousness. 201m. And so on. Another example, concerning GPT-4 and CAPTCHA puzzles. Etc, about “goals” and decisions.

P205, Links in the Chain. Before computers, all chains of information networks were linked by humans. No document created another. But computers can respond to each other. Computers are members of the information networks themselves. Potentially computers know more about the economy and law than most people, and make decisions on them before people notice.

P207, Hacking the Operating System of Human Civilization. Computers turned out to do much more than compute numbers. They can create stories that exploit the weaknesses and biases of the human mind. Before AI, e.g., there was Q, and QAnon. Far-reaching effects. Some politicians endorsed the claims. By 2024 AIs could create and post similar anonymous messages. This could undermine democracy. Example of a Google engineer who became convinced his chatbot was conscious. Example of person who broke into Windsor Castle with a crossbow to assassinate the queen, persuaded by a chatbot. Just ask your oracle what to do. This could be the end of human history. Humans will become more influenced by what computers generate. Science fiction mostly focuses on physical threats from intelligent machines. Now the machines manipulate humans to pull the trigger. Humans have been influenced by stories for thousands of years. Now, we can no longer be sure who is telling the stories.

P214, What are the implications? New information networks will emerge, such as computer-to-human networks. Facebook and TikTok. And computer-to-computer chains. The pace of change will accelerate; we can’t imagine. 216-7. There are now many more terms than just ‘computer.’ While ‘software’ and ‘AI’ mean somewhat different things. And AI might mean ‘alien intelligence.’ A different intelligence from human. Other terms: robot, and bot. And author refers to ‘network’ in the singular.

P218, Taking Responsibility. This network will create new political structures, economic models, and cultural norms. While humans still are in control, we have the power to shape these new realities. Corporations tend to shift responsibility to the customers. But their arguments are naïve or disingenuous. At the same time they’re fighting regulations that would apply to them. And customers and voters don’t actually know how they’re being manipulated. And things are getting more complicated all the time. Especially in finance. Cryptocurrencies. Taxation; how should it work when economic activity occurs outside any state? The word ‘nexus’ has a specific meaning in tax literature. Other questions remain open. … Taxes may need to apply to information, not just money. Perhaps a social credit system. What would political parties think about this?

P224, Right and Left. What are their policies on AI? They could go either way, 224b. Neither party has thought much about it. The engineers who do understand it usually use the system to make money. Exception is Audrey Tang, in Taiwan. More typical are Zuckerberg and Musk. The following chapters explore such issues.

P226, No Determinism. It’s important to remember these issues are not deterministic. Contrast IBM and Soviet emphasis on large central machines, while hobbyists developed personal computers. Jobs and Wozniak created the Apple. If McCarthyism had killed 1960s counterculture, would we have personal computers today? Other examples of time and place. Human choices also affect how tools are used. How radios were used differently in East Germany vs West Germany. We need to understand 21st-century technologies to make similar decisions. Examples of climate change, how projections depend on different models run on different computers. We need to understand how computers make decisions and create ideas…

Ch7, Relentless: The Network Is Always On, p230

We have always been monitored, by other humans, and by animals. Bureaucracies want to monitor everyone, for their own ends. Such monitoring is necessary for providing services too. Past systems had legal or technological limitations. Example of Iosifescu in Romania. The leader, Ceausescu, would have monitored everyone if he could. Handwriting samples from everyone. Spying on the spies. People paid to inform on their neighbors. But then how to analyze millions of reports? They managed to inspire fear in everyone that they were being watched.

P234, Sleepless Agents. Soon our computers *will* be able to track everyone all day—our smartphones do it. And these computers analyze the data themselves. And ‘read’ far faster than humans. And far surpass humans in their ability to spot patterns in data. And make decisions, e.g. is he a suspected terrorist? Such pattern recognition can also be used to identify corrupt officials, tax cheaters, etc. We need to balance benefits against dangers. For one thing, digital bureaucrats are “on” all the time.

P238, Under-the-Skin Surveillance. They can also monitor our own bodies. Eye movements. Personality traits. Preferences. Dictators could use such data to target people they don’t like. Neuralink. There are many difficulties with these. There are already conspiracy theories about computer chips planted in our brains. People should worry about their smartphones instead.

P241, The End of Privacy. New surveillance technologies could bring about the end of privacy. Not just in “states of exception” but in London and New York. CCTV cameras, facial recognition, etc. Every physical activity leaves a data trace. This is how the Jan. 6th rioters were tracked down. Examples. Facial recognition in China. Kidnapped and missing children. Football hooligans in Denmark. Obviously there are privacy concerns. Iran’s hijab laws. One woman arrested for violating it died in police custody, in 2022, leading to protests. The surveillance was ramped up. SMS warnings to women driving without their scarves, leading to confiscation of vehicles. And so on.

P248, Varieties of Surveillance. Partners can monitor their spouses. Stalker technology. Employers surveil their employees. Vehicles monitor drivers. Tripadvisor. Customers review restaurants. Guests can be ranked too. A degree of privacy is lost. And the customer can be a tyrant, with the power to make or break lives.

P250, The Social Credit System. This system gives people points for absolutely everything. The idea goes back to Mesopotamia. Analogous to money. Money can’t buy everything; so we have honor, status, and reputation. The latter can’t be counted, but this is a feature. The two are somehow combined by Tripadvisor. Social credit covers everything that money doesn’t. The Chinese government has such a system. Some complain it turns life into a never-ending job interview. Yet people are always judged against social norms. We knew that various spheres of activity were independent. Not much any more. Increasing stress.

P254, Always On. Humans have always lived in cycles… But computers are always on. Humans will have to adapt. Or, we must design the network to give us time off. Unless the network creates a new kind of world order and imposes it on us.

Ch8, Fallible: The Network Is Often Wrong, p256

Solzhenitsyn and the Soviet labor camps, where people who criticized Stalin were sent. S spent 8 years there. He recalled an event where the audience applauded and were afraid to stop. The first one who stopped was sent to the gulag. Everyone knew they were being watched. As a loyalty test it didn’t discover any kind of truth, but it did impose order. For decades. Even a new kind of human – Homo sovieticus, servile and cynical and passive.

P258, The Dictatorship of the Like. Analogously, social media rewards some kinds of behavior and punishes others. How YouTube discovered that outrage drives engagement, while moderation does not. One result was the rise of Bolsonaro in Brazil. Other examples, via conspiracy theories about schoolteachers brainwashing children. The dictatorship of the like. …

P261, Blame the Humans. We’ve reached the point where major historical events are partly caused by the decisions of nonhuman intelligences. And these networks are not infallible. Yet the tech giants shift blame from the algorithms to “human nature.” [[ That is, the AIs play to the biases of human nature ]] They claim to be striking a balance. Yet they’re aware of their encouraging extremist groups, all the way back to 2016 and 2019. How outrage and misinformation were more likely to be viral. Their only concern was engagement. Any potential damage was ignored. And so the new system encouraged base instincts. The companies relied on the naïve view of information – truth would prevail. It didn’t happen. They had no self-correcting mechanisms. When warned about potential problems, they took no action. They developed error-enhancing mechanisms! Example in Myanmar.

P266, The Alignment Problem. The tech giants claim they’re working to be more ‘socially responsible’ but what does that mean? They could do it if they wanted; they mostly solved the email spam problem. And social media certainly has many benefits. Author met his husband online in 2002. But the larger issue is the ‘alignment problem’. The computer’s goals aren’t necessarily what the human intended. It’s a problem that goes way back. Clausewitz and his book On War. Including the idea ‘war is the continuation of policy by other means.’ Otherwise there’s no point in going to war, for war’s sake. Napoleon achieved victories, but no lasting political achievements. If anything, he led France into decline, and encouraged Germany and Italy to unite. Another example: the American invasion of Iraq in 2003. It helped only Iran. Their notion of ‘maximize victory’ was as pointless as ‘maximize user engagement.’ Like tactics vs strategy. The misalignment is irrationality. A problem with bureaucracies. Still, the problem is worse with computers.

P271, The Paper-Clip Napoleon. One reason it’s worse with computers is that a computer network can be more powerful than any human bureaucracy. Bostrom’s 2014 book Superintelligence. His paperclip example. Not unlike social media algorithms. Another problem is that computers are likely to adopt strategies that would never occur to humans. Example of an AI to play computer games. And another problem is if humans give them a misaligned goal, they are apt not to notice.

P274, The Corsican Connection. How to fix this? [[ Asimov’s laws ! ]] How do we avoid setting the wrong goal? That was a flaw with Clausewitz: how do we rationally set a goal? Consider Napoleon. What are the higher goals? I.e. how to choose among these options? They all involve intersubjective inventions. Napoleon didn’t even consider himself French. There’s no rational way to define an ultimate goal. Tech executives need to understand this.

P277, The Kantian Nazi. For millennia philosophers have struggled with how to define an ultimate goal. Two potential solutions: deontology and utilitarianism. The first concerns universal moral duties or rules that apply to everyone. What would ‘intrinsic goodness’ mean? Kant suggested anything that could be universal. E.g. the golden rule. And what is ‘universal’? Throughout history people make many excuses to kill other people. The others are subhuman. Jews aren’t humans, etc. Well, we should use the most universal definition we can, e.g. for ‘human.’ Why not ‘organism’? Many or most conflicts in history are about identities. In-group and out-group, which are myth based. Anyway these deontological rules would be difficult to apply to a computer. It doesn’t have the same qualms as a human. One rule might be, they must care about any entity capable of suffering. But let’s look at the other options.

P281, The Calculus of Suffering. Jeremy Bentham suggested that the ultimate goal should be to minimize suffering and maximize happiness. This is popular in Silicon Valley, and the effective altruism movement. The problem is we have no measurements of suffering. Yet many cases are clear, e.g. the Holocaust. And homosexuality, a ‘victimless crime’, justified by some on deontological grounds. As did Kant. Bentham took the opposite side. But in situations where suffering is more evenly matched, utilitarianism falters. Covid: saving lives vs social isolation, which might have cost lives. How to calculate the misery index on each side? Other situations. In these cases they resort to deontological rules. Examples 284. Future benefits vs short-term damage. Future salvation, the trick of religions.

P284, Computer Mythology. Throughout history, bureaucratic systems have set goals via mythology. This is where the alignment problem resides. Computers too can create inter-computer realities. This is another central argument of the book. An example is when several computers are linked so users can wander around the same virtual landscape. Pokemon Go is one, in which users ‘see’ objects around the neighborhood that aren’t really there. Other users can see the same objects. Consider the ranks Google gives airlines or restaurants. The rank itself is an inter-computer reality. People use tricks to manipulate the ranks. Consider how holy many people see the city of Jerusalem. Not just a rock, but its ‘sanctity.’ In finances, crypto-currencies are an example. Computers might invent new tools that trigger new crises. If computers learn to do these things, they could be more useful. …

P289, The New Witches. Earlier, we’ve seen how ideas of witches, and kulaks, were invented from mountains of information. In recent centuries, racist mythologies. Categories. 290m. In the 19th century racism pretended to be an exact science. It was all myth. Computers might create such mythologies too. E.g. credit systems, and ‘low-credit people.’ Consider social credit systems and traditional religions. 291b:

Religions like Judaism, Christianity, and Islam have always imagined that somewhere above the clouds there is an all-seeing eye that gives or deducts points for everything we do and that our eternal fate depends on the score we accumulate. … In practical terms, this meant that sinfulness and sainthood were intersubjective phenomena whose very definition depended on public opinion.

With example of how it might work in an Iranian regime.

P292, Computer Bias. Can we avoid ideology by leaving everything to computers? No; they often have biases of their own. E.g., a chatbot trained on Twitter. Facial recognition that worked for white males but not black females. Biases came from the data the AIs were trained on. Reviewing the history of algorithms. Almost by definition, AI learns by interacting with its environment. AI begins life as a ‘baby algorithm.’ AIs need not just exposure to data, but also a goal. Sets of data often have built-in biases. Example of Amazon’s hiring rules. AIs can simply perpetuate existing biases. And it’s very difficult to ‘untrain’ them. Or start over. But where to find unbiased data? Computers think they discover truths about humans, when in fact they’re just imposing a new order on them. Humans need to realize they are unleashing new kinds of independent agents, even new kinds of gods.

P298, The New Gods? Book by Meghan O’Gieblyn about computers and traditional mythologies. In contrast to holy books, computers can adapt themselves. That doesn’t mean computers are holy books. They’re fallible. If they fail to find the right balance between truth and order, catastrophe could result. Example of social credit systems that divide humans into bogus categories. Mythologies need self-correcting mechanisms, as the US Constitution does. Perhaps we teach computers that they can make mistakes. And keep humans in the loop. It’s a political challenge. Next: how democracy and totalitarianism might deal with computer networks.

Part III: Computer Politics

Ch9, Democracies: Can We Still Hold a Conversation? P305

Civilizations are born from the marriage of bureaucracy and mythology. Computer networks will amplify both. There are great potential benefits, but also the potential for the end of human civilization. True, such worries in the past have not come true. Still, lots of trials and tribulations are in store. It takes time for humans to learn a new technology. Example of the Industrial Revolution. It led to the rise of modern imperialism. Colonies were necessary, it was argued, to provide all the resources civilization needed. Many countries set out to build them; it took a century to see it wasn’t a good idea. Other costly experiments were Stalinism and Nazism, which entailed the industrial murder of millions. Competition from liberal democracies led to two world wars and the Cold War. The IR also upset the global ecology. We have yet to build a sustainable industrial society. Aside from that, the era of conquests and genocides seems to be over. But we still need to manage AI.

P309, The Democratic Way. It seemed as if liberal democracy was the better way to go. Liberal democracies possess strong self-correcting mechanisms. But will they survive? One threat could be the annihilation of privacy, and the enablement of totalitarianism. That doesn’t make it inevitable; Canada and Denmark haven’t. Technology isn’t a binary matter. Many books have been written about how democracies can survive in the digital age. Author wants to keep things simple. There are some fundamental principles to follow. First is benevolence. An example is the trust factor in health care. With lawyers, etc. A fiduciary duty. Extend this to the algorithms, which now serve us for free. Pay for services with money rather than information. Another model is education, which many governments provide for free. Second, decentralization. Don’t store all the data in one place, or mix health data with police records, e.g. Some inefficiency is a feature, not a bug. Multiple databases provide self-correcting mechanisms.

Third, mutuality. If democracies monitor their citizens, they should be open about their own operations. We should know as much about the government and corporations as they do about us. Transparency and accountability.

Fourth, there must be room for both change and rest. Resist fixed orders of things, like caste and race, and don’t think that humans are capable of limitless change, as Stalin did, to cite opposite ends of the spectrum. Examples of health care predictions. Bodies are constantly changing. Better to recommend changes to avoid illnesses. Without being a tyrant about it.

P316, The Pace of Democracy. Beware sudden destabilizations that would undermine democracy, e.g. financial crisis and depression. Example Weimar Germany. AI could disrupt job markets. Old jobs are automated and new jobs appear, all the time. Farmers, factory jobs, service jobs. Some intellectual tasks are more easily automated than manual tasks like dish washing. Doctors might be more easily automated than nurses. And computers can be more creative than humans. Computers might do emotional intelligence better; emotions are patterns too. And they have no emotions of their own. Example with ChatGPT in 2023. Another example with medical advice. What about priests? For some roles we want conscious entities, not robots. Yet AI might learn emotions, and be granted rights and liberties as “legal persons.” So future employment will be very volatile.

P322, The Conservative Suicide. In recent decades progressives would see problems and say “It’s such a mess, but we know how to fix it. Let us try.” While conservatives said “It’s a mess, but it still functions. Leave it alone. If you try to fix it, you’ll only make things worse.” Put another way, conservatives are cautious, given that social reality is complicated and people aren’t very good at understanding the world and predicting the future. So conservatism is more about pace than policy. Yet now conservative parties have been hijacked by unconservative leaders, like Trump, and turned into radical revolutionary parties. Now the Republican party, instead of conserving existing institutions, is highly suspicious of them. 324 examples. Now it’s Democrats trying to preserve established institutions. Nobody knows for sure why this is happening. Yet this didn’t happen in the 1930s and 50s. Flexibility will be the most important human skill in the 21st century. And democracies are more flexible than totalitarian regimes.

P326, Unfathomable. But for self-correcting mechanisms to work, what they correct can’t be hidden and unfathomable. This will be more difficult in the computer age. Human systems reflect human biases and flaws. Example of Brown vs Board of Education. (Biological dramas: Us vs Them, and Purity vs Pollution. 328.0) We understand why these bio-dramas exist, and we understand why they’re flawed. And so the 14th amendment and Brown v Board were corrections. But computers might repeat these kinds of errors, with their own biases and inter-computer mythologies. Example of a 2013 case involving a legal algorithm.

P331, The Right to an Explanation. Computers make more and more decisions about us. Should the right to an explanation be a new human right? Example of a program that plays Go, called AlphaGo. Move 37, something no human had ever anticipated. The computer went where no human had ever gone. [[ analogous to speculation about alien intelligence ]] Nor could it be ‘explained.’ Such an intelligence undermines democracy. No one understands how these complicated things work.

This may be why many are attracted to charismatic leaders and conspiracy theories. Yet charismatic leaders don’t understand either. People want a single cause for everything, 334.7. Algorithms factor many things together. Full explanations would be absurdly detailed, e.g. 335. So having a right to an explanation may not help much. … Some algorithms might be vetted with others…

P338, Nosedive. But if people have trouble with bureaucracies (because they’re not biological dramas) they’ll have even more trouble with algorithms. P338-9:

It has always been difficult for humans to understand bureaucracy, because bureaucracies have deviated from the script of the biological dramas, and most artists have lacked the will or the ability to depict bureaucratic dramas. For example, novels, movies, and TV series about twenty-first-century politics tend to focus on the feuds and love affairs of a few powerful families, as if present-day states were governed in the same way as ancient tribes and kingdoms. … To survive, democracies require not just dedicated bureaucratic institutions that can scrutinize these new structures but also artists who can explain the new structures in accessible and entertaining ways.

With the example of the “Nosedive” episode of the series Black Mirror.

[[ Cue science fiction, which is dismissed by some precisely because it is concerned with other things besides “biological dramas.” Maybe the emergence of sf in 20th century can be seen as a reaction to an increasingly complex society. ]]

And about social credit systems. Which is why there has been a proposal to prohibit them, 340t.

P340, Digital Anarchy. A final threat is digital anarchy. It becomes more difficult to ensure order. The network makes it easier to join the debate. There are no gatekeepers, like editors. More groups and factions are part of the conversation, but that requires adjusting the terms of the debate. And then there are bots, which generate millions of tweets. And now the bots can generate new ideas, including inaccurate ones. 342.2. These bots can build friendships with people and change people’s minds. If we’re flooded with computer-generated fake news, no consensus will remain about basic rules of discussions, or basic facts. 343.5.

P343, Ban the Bots. So we should regulate AI to protect the infosphere from fake news. Dennett suggests this be done like regulations in the money market, to protect against counterfeit money. Protect against counterfeit humans. Bots don’t have rights. Also, ban unsupervised algorithms from curating public debates. Stop algorithms from spreading outrage. E.g. videos that aren’t aligned with a company’s agenda. [[ but this is already being done. ]] These are things democracies must do. In contrast to that naïve view of information… [[ So why are Republicans *against* any regulation of AI… ? ]]

P345, The Future of Democracy. Now democracy is threatened because information technology is too sophisticated. Already democracies are breaking down, e.g. how parties in the US can’t agree on basic facts. There were conflicts in the 60s, but we still agreed on who won elections. What is driving people apart? Many say social media algorithms. Is there something else? We can’t even agree on the reasons. What about totalitarian regimes..?

Ch10, Totalitarianism: All Power to the Algorithms?, p348

Summary so far. Volume is an advantage in some industries, but not others. You can compete with your local McDonalds, but not with Google. Would this advantage a totalitarian regime? Blockchain might promote democracy (decisions require approval of 51% of users), but the problem is what a “user” is. A government can control 51% of users. With that it can change the past, expunge the memory of people it doesn’t like. Stalin tried to delete Trotsky. These things would be far easier with blockchain.

P351, The Bot Prison. But there are problems. Dictators lack experience with inorganic agents. You can’t terrorize them. There’s an alignment problem. Totalitarian information networks often rely on doublespeak; Russia claims to be a democracy; the Ukraine war is not a war. Computers don’t understand doublespeak. Humans avoid pointing out contradictions, but computers wouldn’t. Questions lead to trouble, so humans don’t question. How would a chatbot learn if it didn’t ask questions? Also, humans change their minds and ‘forget’ earlier positions. How would a chatbot do that? Democracies would have similar problems, but not as many.

P354, Algorithmic Takeover. Or, algorithms might take control. Hypothetical example of a security algorithm warning Great Leader of an assassination plot. To hack the entire system, the algorithm need only manipulate a single individual. Example of Tiberius and Sejanus.

P358, The Dictator’s Dilemma. Since totalitarian regimes believe their leaders are infallible, they might expect AI to be infallible. And so they would have no self-correcting mechanisms. The risk is that the leaders become puppets of the AI. SF is full of such plots, usually set in democracies. This recalls the Russell-Einstein Manifesto of 1955.

Ch11, The Silicon Curtain: Global Empire or Global Split?, p361

But we live in an interconnected world. And humanity has never been united. AIs could launch missiles or start pandemics. They could spread fake news to undermine the social order. Even if most societies regulate AI, if only a few do not, the danger remains. Just as with climate change. So we need to understand how AI might change relations between societies at a global level. There are now just two superpowers, but even tiny nations can manipulate them to extract concessions. We’re in a post-imperial era. Two particular dangers with AI: it could create a new imperial era; or humanity could split along a Silicon Curtain. Either could have devastating consequences.