- The ‘No Kings’ demonstrations yesterday were a huge success, the parade in Washington DC less so;

- How the White House lies about those demonstrations;

- How MAGA and Musk are somehow blaming the *left* for the shootings of Democratic lawmakers in Minnesota;

- With thoughts about the upside-down world of MAGA conservatives.

As widely covered in the news media, hundreds of “No Kings” demonstrations were held across the country yesterday, Saturday.

A gallery of photos.

The Atlantic, Alan Taylor, 15 Jun 2025: Photos: ‘No Kings’ Protests Across America, subtitled “Yesterday, according to estimates by event organizers, millions marched in protest against the Trump administration, including its recent controversial immigration-enforcement raids. Hundreds of ‘No Kings’ demonstrations took place in cities and towns throughout the U.S.”

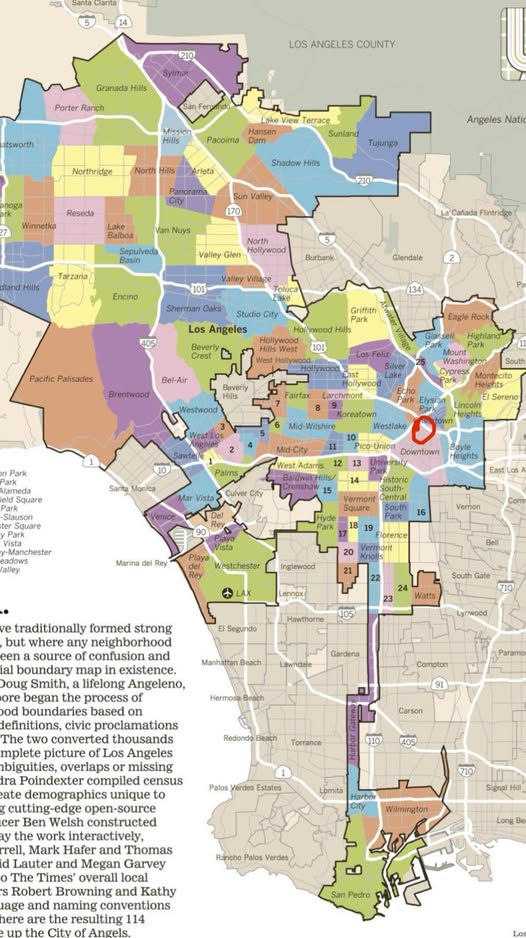

San Francisco (above), Des Moines, Ocean Beach in San Francisco, Chicago twice, Cincinnati, Boise, Asheville, Charlotte, near Mar-a-Lago, Boston twice, Jackson, Nashville twice, St. Paul, Los Angeles twice, San Francisco again, Los Angeles again, New York City (with Mark Ruffalo and Susan Sarandon), Philadelphia, Atlanta, Louisville, Philadelphia again, and Portland OR.

\\

So naturally, in the spirit of “alternative facts” and the size of Trump’s 2017 inauguration crowd, Trump’s spokesman Steven Cheung denied the evident facts, and misrepresented, or misunderstood, what those demonstrations were all about.

JMG, 15 Jun 2025: WH Declares “No Kings Day” Protests To Have Been “Complete Utter Failure With Minuscule Attendance”

The JMG post displays the statement from White House Communications Director Steven Cheung, and then quotes CBS News:

Demonstrators crowded into streets, parks and plazas across the U.S. on Saturday to protest President Trump, marching through downtowns and blaring anti-authoritarian chants mixed with support for protecting democracy and immigrant rights.

Organizers of the “No Kings” demonstrations said millions had marched in hundreds of events. Governors across the U.S. had urged calm and vowed no tolerance for violence, while some mobilized the National Guard ahead of marchers gathering. Confrontations were isolated.

Huge, boisterous crowds marched in New York, Denver, Chicago, Houston and Los Angeles, some behind “no kings” banners. Thousands of protesters across L.A. — where demonstrations have occurred in the past week over immigration raids — were largely peaceful throughout the day.

\\

As for “minuscule attendance”…

Attendance at the parade in Washington DC was far smaller than anticipated. Sure, sure, the threat of rain may have kept some away. But one of the photos I saw was of Trump, looking very perturbed. Apparently the parade didn’t meet his expectations.

\\\

Meanwhile, without any evidence whatsoever:

JMG, 14 Jun 2025: Musk Blames “Violent Far-Left” For Minnesota Shooting

from

The Daily Beast, 15 Jun 2025: Musk Slammed for Calling Anti-Abortion Dem Killer ‘Far Left’, subtitled “The suspect in the murder of a Minnesota lawmaker reportedly had a target list that included Democrats like Governor Tim Walz and Rep. Ilhan Omar.”

Elon Musk is getting dragged online after he tried to blame the “far left” for the murder of a Democratic Minnesota lawmaker—a claim even his own AI chatbot debunked.

“The far left is murderously violent,” Musk wrote on X, reposting a user who claimed that multiple recent high-profile killings—including Saturday’s assassination of Democratic State Rep. Melissa Hortman and her husband—had been perpetrated by “the left.”

However, early evidence suggests the suspected gunman in Hortman’s killing, identified as Vance Luther Boelter, was actually targeting figures who are frequently branded as belonging to the “far left” by detractors.

\

Again and again, it strikes me that conservatives, including MAGA, live in an upside-down world in which evidence is frangible and stories and ideologies prevail. And they are constantly accusing the other side of the tactics that they themselves use. Because it’s never about verifiable facts. It’s about who can make the biggest social media splash. Also, as discussed a post or two ago, there is so much information in the world that most people simply can’t keep up well enough to reach a balanced understanding; and so most people restrict their sources to those that support their worldview. In the examples in this post, some people will believe Steven Cheung, and Donald Trump, because they don’t look at any other news sources and so don’t know any better. They will never hear about the extent of the ‘No Kings’ demonstrations because Fox News and Daily Wire and Newsmax will downplay them and other right-wing sites will ignore or deny them, as Cheung has done. They’ve become committed to those sources, and couldn’t think of doubting them now.

We are living in a set of parallel realities. The one that most aligns with actual reality will eventually prevail.